Import the data from the `tree` table of the MySQL database `phx` into Hive.

Command:

    sqoop import --connect jdbc:mysql://node1:3306/phx \
      --username root \
      --table tree \
      --hive-import \
      --hive-overwrite \
      --create-hive-table \
      --hive-table tree1 \
      --target-dir /sqoop/tree3

Result:

    [root@node1 conf]# sqoop import --connect jdbc:mysql://node1:3306/phx \
      --username root \
      --table tree \
      --hive-import \
      --hive-overwrite \
      --create-hive-table \
      --hive-table tree1 \
      --target-dir /sqoop/tree3

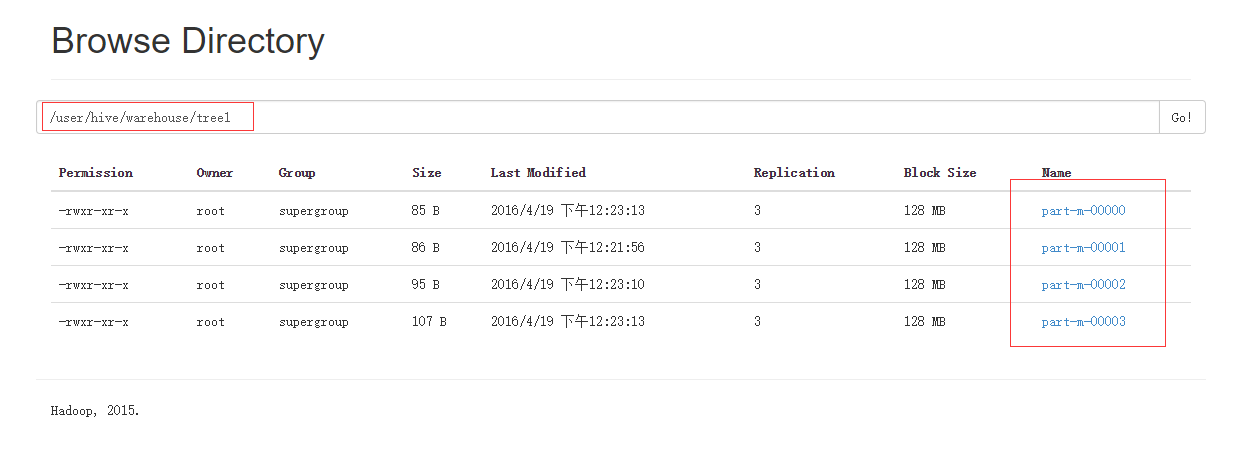

Browse HDFS, as shown in the figure below:
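The same check can also be done from the command line. The sketch below assumes that Hive uses its default warehouse directory `/user/hive/warehouse` and that `tree1` lives in the default database; neither is stated in the original, so adjust `WAREHOUSE_DIR` to match your `hive.metastore.warehouse.dir`. With `--hive-import`, Sqoop typically writes to `--target-dir` first and Hive then moves the files into the warehouse, so the data is expected under the table directory rather than under `/sqoop/tree3`. `table_path` is a hypothetical helper, not part of Hadoop or Sqoop.

```shell
# Assumption: default Hive warehouse location; override via the environment if yours differs.
WAREHOUSE_DIR="${WAREHOUSE_DIR:-/user/hive/warehouse}"

# Hypothetical helper: print the HDFS directory of a Hive table in the default database.
table_path() {
  printf '%s/%s\n' "$WAREHOUSE_DIR" "$1"
}

# Only touch HDFS when the hdfs client is actually installed.
if command -v hdfs >/dev/null 2>&1; then
  # List the files that the import produced for tree1.
  hdfs dfs -ls "$(table_path tree1)"
  # Peek at the first rows; the glob is quoted so that HDFS, not the local shell, expands it.
  hdfs dfs -cat "$(table_path tree1)/part-m-*" | head -n 5
fi
```

If the listing is empty, check whether the table was created in a different database or with a custom location.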

Check in Hive that the data is there:

    hive> select * from tree1;

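Parameter description

| Parameter | Description |
|---|---|
| `--hive-home` | Hive installation directory; use this parameter to override the default Hive directory |
| `--hive-overwrite` | Overwrites data that already exists in the Hive table |
| `--create-hive-table` | Defaults to false; when set, the job fails if the target table already exists |
| `--hive-table` | Followed by the name of the Hive table to create |
| `--table` | Specifies the name of the relational database table |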
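To see how these parameters fit together, here is a minimal sketch that assembles the import command from its parts and prints it instead of running it. `build_import_cmd` is a hypothetical helper, not part of Sqoop; the host `node1`, port `3306`, and user `root` are taken from the command above.

```shell
# Hypothetical helper: print a sqoop import command for a Hive import.
# $1 = MySQL database, $2 = source table, $3 = Hive table, $4 = HDFS target directory
build_import_cmd() {
  printf '%s' "sqoop import"
  printf ' %s' \
    --connect "jdbc:mysql://node1:3306/$1" \
    --username root \
    --table "$2" \
    --hive-import \
    --hive-overwrite \
    --create-hive-table \
    --hive-table "$3" \
    --target-dir "$4"
  printf '\n'
}

# Dry run: print the command used in this article without executing it.
build_import_cmd phx tree tree1 /sqoop/tree3
```

Because `--create-hive-table` makes the job fail when the target table already exists, a repeated import into `tree1` would probably need that flag dropped while keeping `--hive-overwrite`.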